Build a Chatty AI by Hand: A Complete Guide to Fine-Tuning DeepSeek

In an era when everyone is talking about AI, have you ever thought of fine-tuning, by hand, an assistant that can really hold a conversation? ChatGPT-style assistants answer every question in the same boilerplate tone, but what many of us want is an AI partner that speaks like a person and has a personality. Today we demystify large-model fine-tuning and show how to teach DeepSeek-7B to speak "human language".

I. AI fine-tuning: giving the big model an "elective course"

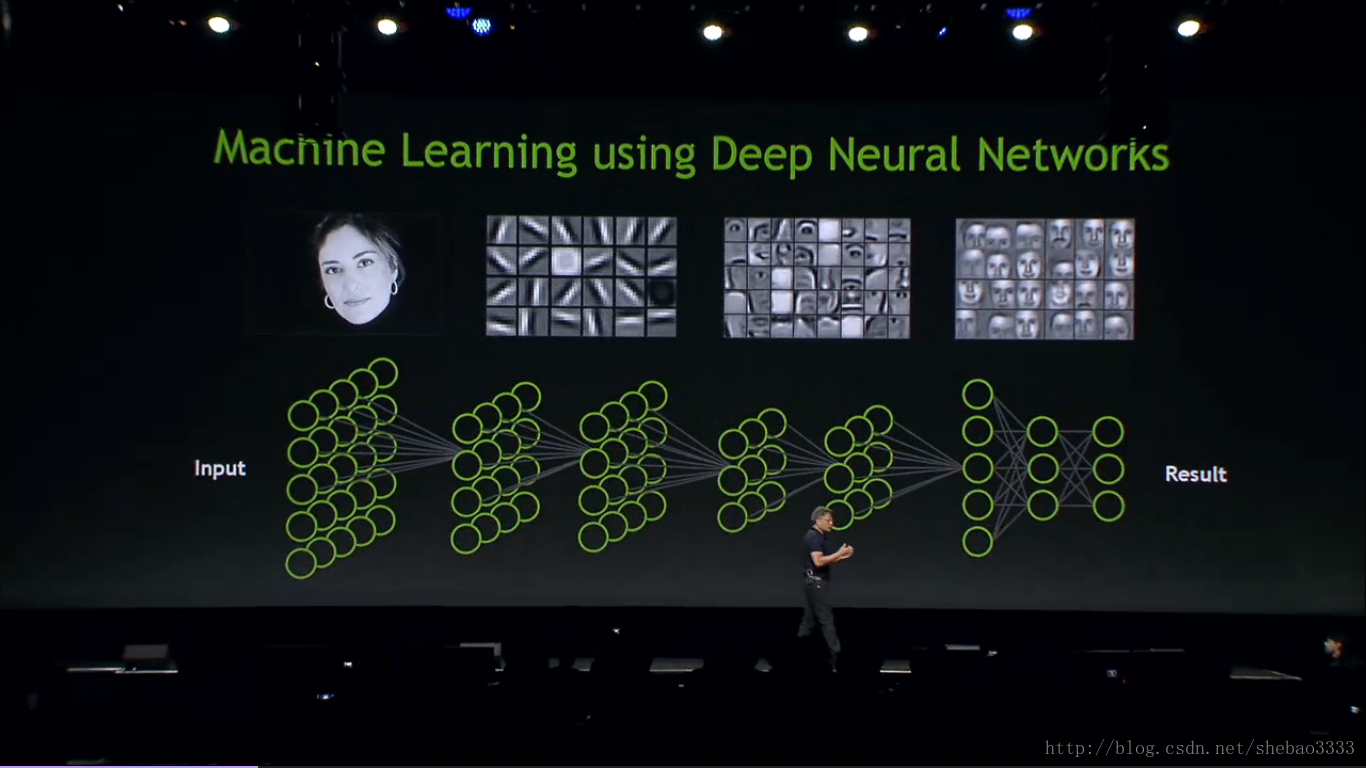

Just as a top student can take extra specialized courses, fine-tuning lets a general-purpose AI become a domain expert. Out of the box, DeepSeek-7B is a strong generalist: it can talk about astronomy and geography, but it knows nothing of the "Zhen Huan style" of speech. With LoRA fine-tuning, we only need to train about 37.47 million parameters, roughly 0.5% of the model's total, for it to pick up a specific conversational style.
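Where does a figure like 37.47 million come from? A LoRA adapter on a weight of shape (out × in) adds two small matrices, A (r × in) and B (out × r), so it costs r · (in + out) parameters. The sketch below counts them for an assumed DeepSeek-7B-like shape (30 layers, hidden size 4096, MLP size 11008) with adapters on all seven attention and MLP projections. The rank r = 16 and the module list are guesses, since the article does not state them, but together they happen to reproduce the quoted figure.

```python
# Assumed model shape for a DeepSeek-7B-like decoder (not taken from the article).
HIDDEN = 4096
INTERMEDIATE = 11008
NUM_LAYERS = 30

def lora_params(in_features: int, out_features: int, rank: int) -> int:
    """LoRA adds A (rank x in) and B (out x rank) beside a frozen weight."""
    return rank * in_features + out_features * rank

def count_trainable(rank: int) -> int:
    # Assumed set of projections that receive adapters, as (in, out) shapes.
    shapes = {
        "q_proj": (HIDDEN, HIDDEN),
        "k_proj": (HIDDEN, HIDDEN),
        "v_proj": (HIDDEN, HIDDEN),
        "o_proj": (HIDDEN, HIDDEN),
        "gate_proj": (HIDDEN, INTERMEDIATE),
        "up_proj": (HIDDEN, INTERMEDIATE),
        "down_proj": (INTERMEDIATE, HIDDEN),
    }
    per_layer = sum(lora_params(i, o, rank) for i, o in shapes.values())
    return per_layer * NUM_LAYERS

if __name__ == "__main__":
    trainable = count_trainable(rank=16)
    total = 7_000_000_000  # rough, assumed base-model size
    print(f"trainable LoRA params: {trainable:,}")      # 37,478,400
    print(f"share of base model: {trainable / total:.2%}")  # 0.54%
```

With r = 8 the same count halves to 18,739,200, a quick way to see how the rank trades adapter capacity against memory.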

The trick behind this technique is a kind of "frozen pedagogy": the model's original weights stay frozen, and small trainable "knowledge plug-ins" are inserted alongside the projection layers of the attention mechanism (q_proj, v_proj, and so on). It is like adding a shelf of thematic manuals to a library: the original collection stays untouched, and the new customized content sits next to it.
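The "plug-in" idea can be made concrete with the LoRA forward pass, h = W x + (α / r) · B A x, where W is the frozen original weight and only A and B are trained. Below is a dependency-free sketch on plain Python lists; the class name `LoRALinear`, the initialization scale and the toy numbers are illustrative assumptions, not the API of any library.

```python
import random

def matvec(m, v):
    """Multiply a matrix (list of rows) by a vector (list)."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]

class LoRALinear:
    """Frozen weight W plus a trainable low-rank update B @ A (illustrative sketch)."""

    def __init__(self, weight, rank, alpha, seed=0):
        rng = random.Random(seed)
        self.weight = weight  # frozen: never updated during fine-tuning
        out_f, in_f = len(weight), len(weight[0])
        # A starts small and random, B starts at zero, so the adapter
        # initially adds nothing and the layer behaves like the original.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(in_f)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(out_f)]
        self.scale = alpha / rank

    def forward(self, x):
        base = matvec(self.weight, x)               # original behaviour
        delta = matvec(self.B, matvec(self.A, x))   # low-rank "plug-in"
        return [b + self.scale * d for b, d in zip(base, delta)]

if __name__ == "__main__":
    W = [[1.0, 0.0, 2.0], [0.0, 1.0, -1.0]]
    layer = LoRALinear(W, rank=1, alpha=2)
    print(layer.forward([1.0, 2.0, 3.0]))  # [7.0, -1.0], same as W @ x while B is zero
```

Because B starts at zero, the tuned layer initially reproduces the original one exactly; training only ever moves A and B, which is why the base model's knowledge is preserved.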

II. Building an AI’s "dialog script"

To teach the AI a specific conversational script, we need to design the training data carefully. We use the "golden triangle" format of instruction, input and output:

{
"instruction": "Now you are going to play Zhen Huan, the woman at the emperor's side",
"input": "Who are you?",
"output": "My father is Zhen Yuandao, the junior secretary of the Da Lisi."
}
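Records like the one above are usually stored together as a JSON array. A minimal sketch, assuming that layout, that validates each record and splits it into the text the model reads (instruction plus input) and the text it must learn to produce (output). The "User:/Assistant:" template is a hypothetical choice; in practice you would use whatever chat template your base model expects.

```python
import json

REQUIRED_KEYS = ("instruction", "input", "output")

def load_records(text):
    """Parse a JSON array of instruction-input-output records and check their keys."""
    records = json.loads(text)
    for i, rec in enumerate(records):
        missing = [k for k in REQUIRED_KEYS if k not in rec]
        if missing:
            raise ValueError(f"record {i} is missing {missing}")
    return records

def to_prompt_and_response(rec):
    """Split a record into what the model reads and what it must learn to say.

    The prompt template here is hypothetical, chosen only for illustration.
    """
    prompt = f"{rec['instruction']}\n\nUser: {rec['input']}\n\nAssistant: "
    return prompt, rec["output"]

if __name__ == "__main__":
    raw = json.dumps([{
        "instruction": "Now you are going to play Zhen Huan, the woman at the emperor's side",
        "input": "Who are you?",
        "output": "My father is Zhen Yuandao, the junior secretary of the Da Lisi.",
    }])
    prompt, response = to_prompt_and_response(load_records(raw)[0])
    print(prompt + response)
```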

This structured data works like a play script, explicitly guiding how the AI should respond in each scenario. When processing the 30,000 single-turn conversations, the code tokenizes each user question and its reference answer into a single sequence capped at 384 tokens, rather like converting a conversation into Morse code the AI can understand.
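The encoding step can be sketched as follows: prompt and answer are concatenated, prompt positions get the label -100 (the default `ignore_index` of PyTorch's `CrossEntropyLoss`, which Hugging Face causal language models also skip), and everything is truncated to 384 tokens. The character-level `toy_tokenize` and `eos_id=0` are hypothetical stand-ins for a real tokenizer and its end-of-sequence id.

```python
MAX_LENGTH = 384      # the article's sequence cap
IGNORE_INDEX = -100   # label value the loss function skips

def toy_tokenize(text):
    """Hypothetical stand-in tokenizer: one id per character."""
    return [ord(ch) for ch in text]

def build_example(prompt, response, eos_id=0, tokenize=toy_tokenize):
    prompt_ids = tokenize(prompt)
    response_ids = tokenize(response) + [eos_id]  # teach the model to stop
    input_ids = prompt_ids + response_ids
    attention_mask = [1] * len(input_ids)
    # Prompt positions get -100, so only the answer contributes to the loss.
    labels = [IGNORE_INDEX] * len(prompt_ids) + response_ids
    # Truncate all three lists together so positions stay aligned.
    return {
        "input_ids": input_ids[:MAX_LENGTH],
        "attention_mask": attention_mask[:MAX_LENGTH],
        "labels": labels[:MAX_LENGTH],
    }

if __name__ == "__main__":
    ex = build_example("ab", "c")
    print(ex)  # labels: [-100, -100, 99, 0]
```

One consequence worth checking: if a prompt alone is longer than 384 tokens, truncation removes the whole answer and every label becomes -100, so the sample contributes nothing to training; filtering such samples out beforehand is a sensible extra step.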

III. "Black tech" on the training floor

When training on an RTX 3090 graphics card, a few key techniques determine success or failure:

- Gradient accumulation: accumulating gradients over 16 steps gives an effective batch size of 128 while only 8 samples (128 / 16) are processed at a time, which the author reports cuts the memory footprint by about 70%

- Label masking: the label of every prompt token is set to -100, so the loss is computed only on the response and the AI focuses on learning how to answer

- Mixed precision: storing the model in fp16 halves its size compared with fp32 and roughly doubles training speed

The loss curve in the training log looks like an electrocardiogram; once the value falls from 3.2 to about 0.8, the AI has grasped the essence of the conversation style. The whole run takes only about an hour and consumes roughly as much electricity as boiling 20 pots of water.
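Why gradient accumulation gives the same update as one large batch: if each micro-batch gradient is divided by the number of accumulation steps, the accumulated sum equals the full-batch gradient. A toy sketch on a one-parameter linear model, with 128 samples split into 16 micro-batches of 8 to mirror the numbers above; the model and data are invented for illustration. If the training used Hugging Face `TrainingArguments`, this split would correspond to `per_device_train_batch_size=8` and `gradient_accumulation_steps=16` on a single GPU.

```python
def grad_mse(w, batch):
    """Gradient of the mean squared error of y ~ w * x over one batch."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)

def accumulated_grad(w, data, micro_batch_size):
    """Accumulate gradients over equal-sized micro-batches.

    Each micro-batch gradient is divided by the number of steps, the same
    scaling as dividing the loss by gradient_accumulation_steps.
    """
    if len(data) % micro_batch_size:
        raise ValueError("this sketch assumes equal-sized micro-batches")
    steps = len(data) // micro_batch_size
    total = 0.0
    for start in range(0, len(data), micro_batch_size):
        micro_batch = data[start:start + micro_batch_size]  # only this slice is "in memory"
        total += grad_mse(w, micro_batch) / steps
    return total

if __name__ == "__main__":
    data = [(i / 128, 2 * i / 128 + 0.1) for i in range(128)]
    full = grad_mse(0.5, data)               # one batch of 128
    accum = accumulated_grad(0.5, data, 8)   # 16 steps of 8
    print(full, accum, abs(full - accum) < 1e-9)
```

The equivalence is exact only for equal-sized micro-batches and losses averaged per batch; layers such as batch normalization, which see one micro-batch at a time, would break it, but transformer decoders do not use them.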

IV. Metamorphosis from technology to art

The fine-tuned model changes remarkably. When a user asks "Why can't I ever do my job well?", the original model replies with standard workplace advice, while the tuned AI gently reassures: "Anxiety is like sand in the palm of your hand: the tighter you hold it, the faster it slips away. Why don't you take three deep breaths first, and we'll slowly clear our heads..."

In a legal-advice scenario, a model fine-tuned on 500 pieces of specialized data can explain "the contractual validity of a contract signed under duress": it not only cites Article 150 of the Civil Code but also offers an analogy, comparing it to "a charter agreement signed in a storm".

V. AI tuning for everyone

Today even a home computer with only 16 GB of video memory can handle large-model fine-tuning. SwanLab, an open-source training-monitoring platform, makes following a training run as intuitive as reading a stock chart. When you press the start button on Colab, you join this democratized AI revolution.
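A back-of-the-envelope check of the 16 GB claim, as a sketch under loud assumptions: roughly 7 billion frozen base parameters, the article's figure of about 37.47 million trainable LoRA parameters, and 16 bytes per trainable parameter for an fp32 weight, an fp32 gradient and two fp32 Adam moments. Activations, the CUDA context and framework overhead are ignored, so the real requirement will be higher.

```python
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2}

def estimate_vram_gb(base_params, trainable_params, weight_dtype="fp16"):
    """Rough VRAM lower bound in GB (1e9 bytes), ignoring activations.

    Assumptions: frozen base weights are stored in `weight_dtype`; each
    trainable LoRA parameter needs an fp32 weight, an fp32 gradient and two
    fp32 Adam moment estimates, i.e. 16 bytes.
    """
    frozen_bytes = base_params * BYTES_PER_PARAM[weight_dtype]
    adapter_bytes = trainable_params * 4 * 4
    return (frozen_bytes + adapter_bytes) / 1e9

if __name__ == "__main__":
    base = 7_000_000_000   # assumed, rounded size of DeepSeek-7B
    lora = 37_478_400      # about 37.47 million, as quoted in the article
    for dtype in ("fp16", "fp32"):
        print(dtype, round(estimate_vram_gb(base, lora, dtype), 1), "GB")
```

Under these assumptions fp16 weights come to about 14.6 GB, just under a 16 GB card, while fp32 weights would need about 28.6 GB and could not fit at all. Whether the activations fit in the remaining headroom depends on batch size and sequence length, which is where small micro-batches with gradient accumulation help.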

From the Zhen Huan style to legal documents, from medical diagnosis to poetry writing, every vertical is waiting for its own dedicated AI. This is no longer the preserve of big tech companies but a future within reach of any ordinary person with an idea; after all, training an AI that can chat is already easier than earning a barista's license.